
12/17/2023 0 Comments Tesla fp64

On single precision floating point (FP32) machine learning training and eight-bit integer (INT8) machine learning inference, the performance jump from Volta to Ampere is an astounding 20X. Run through the Tensor Cores, FP32-class math on the Ampere GA100 GPU weighs in at a peak of 312 teraflops and INT8 math weighs in at a peak of 1,248 teraops (both are peak ratings that count the chip's sparsity acceleration). Obviously, 20X is a very big leap, the kind that comes from clever architecture, as the addition of Tensor Cores did for Volta. One of the clever bits in the Ampere architecture this time around is a new numerical format called Tensor Float32, which is a hybrid between single precision FP32 and half precision FP16. It is distinct from the Bfloat16 format that Google created for its Tensor Processing Unit (TPU) and that many CPU vendors are adding to their math units because of the advantages it offers in boosting AI throughput.

Double precision is a different story. You might think that the FP64 unit counts scaled, more or less, with the increase in transistor density. But actually, the raw FP64 units in the Ampere GPU only hit 9.7 teraflops, half of the 19.5 teraflops available through the Tensor Cores (which did not support 64-bit processing in Volta).

We will be doing a detailed architectural dive, of course, but in the meantime, here are the basic feeds and speeds of the device, which is absolutely jam-packed with all kinds of compute engines in its 108 streaming multiprocessors (also known as SMs):

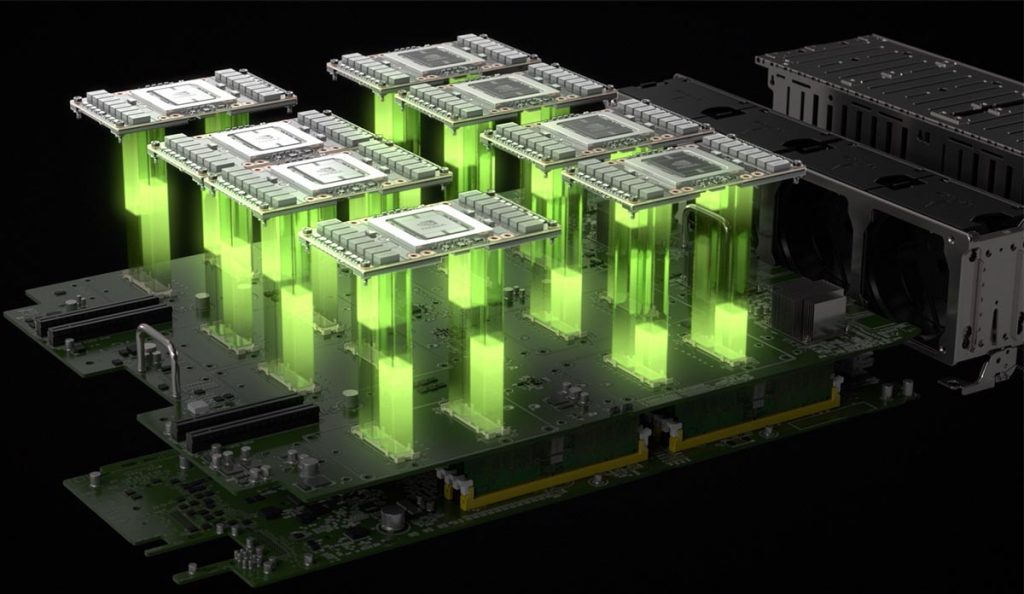
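To make the Tensor Float32 idea a little more concrete, here is a minimal Python sketch. It assumes the bit layouts Nvidia has described publicly, which the text above does not spell out: TF32 keeps the 8-bit exponent of FP32 and the 10-bit mantissa of FP16, while Bfloat16 keeps the same 8-bit exponent with only a 7-bit mantissa. The helper names are hypothetical, and plain truncation stands in for whatever rounding the hardware actually performs.

```python
import struct


def float_to_bits(x: float) -> int:
    """Return the IEEE 754 binary32 bit pattern of x as an unsigned integer."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def bits_to_float(bits: int) -> float:
    """Interpret a 32-bit unsigned integer as an IEEE 754 binary32 value."""
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFFFFFF))[0]


def truncate_mantissa(x: float, kept_bits: int) -> float:
    """Keep the FP32 sign and 8-bit exponent, zero all but the top
    `kept_bits` of the 23-bit mantissa.

    Assumption: truncation (round toward zero) is used for simplicity;
    NaN payload edge cases are ignored.
    """
    dropped = 23 - kept_bits
    mask = (0xFFFFFFFF << dropped) & 0xFFFFFFFF
    return bits_to_float(float_to_bits(x) & mask)


def to_tf32(x: float) -> float:
    """Emulate TF32 storage: 1 sign, 8 exponent, 10 mantissa bits (assumed layout)."""
    return truncate_mantissa(x, 10)


def to_bf16(x: float) -> float:
    """Emulate Bfloat16 storage: 1 sign, 8 exponent, 7 mantissa bits (assumed layout)."""
    return truncate_mantissa(x, 7)


if __name__ == "__main__":
    for value in (1.0 + 2.0 ** -10, 1.0 + 2.0 ** -11, 3.14159265, 1e38):
        print(f"{value!r:>22}  tf32={to_tf32(value)!r}  bf16={to_bf16(value)!r}")
```

Under these assumptions, both formats cover the full FP32 range (a value like 1e38 survives), but TF32 resolves steps of 2^-10 around 1.0 where Bfloat16 only resolves 2^-7, which is the sense in which TF32 sits between FP32 and FP16.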

The in-person GPU Technology Conference held annually in San Jose may have been canceled in March thanks to the coronavirus pandemic, but behind the scenes Nvidia kept on pace with the rollout of its much-awaited “Ampere” GA100 GPU, which is finally being unveiled today. Not all of the feeds and speeds and architectural twists and tweaks have been divulged yet, but we will tell you what we know and do a deep architecture dive next week when that data is available.

Here is the most important bit, right off the bat. The Ampere chip is the successor not only to the “Volta” GV100 GPU that was used in the Tesla V100 accelerator announced in May 2017 (aimed at both HPC and machine learning training workloads) but, as it turns out, also to the “Turing” TU104 GPU used in the Tesla T4 accelerator launched in September 2018 (aimed at graphics and machine learning inference workloads). Nvidia has created a single GPU that can not only run HPC simulation and modeling workloads considerably faster than Volta, but that also brings the new-fangled machine learning inference based on Tensor Cores onto the same device.

With the Ampere chip, Nvidia has also announced that it has been working with the Spark community for the past several years to accelerate that in-memory data analytics platform with GPUs, and that this work is now ready, too. And thus, the massive amount of preprocessing as well as the machine learning training and the machine learning inference can now all be done on the same accelerated platforms. There are still CPUs in these systems, but they are relegated to handling serial processes in the code and managing large blocks of main memory. The bulk of the computing in this AI workflow is being done by the GPUs, and we will show the dramatic impact of this in a separate story detailing the new Nvidia DGX, HGX, and EGX systems based on the Ampere chips after we go through the technical details we have gathered about the new Ampere GPUs.

Let’s start with what we know about the Ampere GA100 GPU. The chip is etched in the 7 nanometer processes of Taiwan Semiconductor Manufacturing Corp, and the device weighs in at 54.2 billion transistors and comes in at a reticle-stretching 826 square millimeters of area. The “Pascal” GP100 GPU announced in April 2016 was etched with 16 nanometer processes by TSMC, weighed in at 15.3 billion transistors, and had an area of 610 square millimeters. This was a ground-breaking chip at the time, but it seems to lack heft by comparison. The Volta GV100 from three years ago, etched in 12 nanometer processes, was almost as large as Ampere at 815 square millimeters with 21.1 billion transistors. Ampere has 2.6X as many transistors packed into an area that is 1.4 percent bigger, and what we all want to know is how those transistors were arranged to yield a massive boost in performance.

Figuring out the double precision floating point performance boost moving from Volta to Ampere is easy enough. Paresh Kharya, director of product management for datacenter and cloud platforms, said in a prebriefing ahead of the keynote address by Nvidia co-founder and chief executive officer Jensen Huang announcing Ampere that peak FP64 performance for Ampere was 19.5 teraflops (using Tensor Cores), 2.5X larger than for Volta.
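The transistor counts, die areas, and FP64 figures quoted above can be checked with a few lines of arithmetic. The sketch below uses only numbers from the text; the `Die` class and variable names are hypothetical, and the Volta FP64 figure is not quoted directly but implied by the "2.5X larger" statement, so treat it as a derived assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Die:
    name: str
    process_nm: int
    transistors_billion: float
    area_mm2: float

    @property
    def density_mtr_per_mm2(self) -> float:
        """Millions of transistors per square millimeter."""
        return self.transistors_billion * 1000.0 / self.area_mm2


# Figures as quoted in the article.
PASCAL = Die("Pascal GP100", 16, 15.3, 610.0)
VOLTA = Die("Volta GV100", 12, 21.1, 815.0)
AMPERE = Die("Ampere GA100", 7, 54.2, 826.0)

# Generation-over-generation ratios, Volta to Ampere.
transistor_ratio = AMPERE.transistors_billion / VOLTA.transistors_billion
area_growth_pct = (AMPERE.area_mm2 / VOLTA.area_mm2 - 1.0) * 100.0
density_ratio = AMPERE.density_mtr_per_mm2 / VOLTA.density_mtr_per_mm2

# FP64: 19.5 teraflops via Tensor Cores is quoted as 2.5X Volta,
# so Volta's peak is implied (an assumption derived from the text).
AMPERE_FP64_TENSOR_TFLOPS = 19.5
AMPERE_FP64_RAW_TFLOPS = 9.7
implied_volta_fp64_tflops = AMPERE_FP64_TENSOR_TFLOPS / 2.5
raw_fp64_gain = AMPERE_FP64_RAW_TFLOPS / implied_volta_fp64_tflops

if __name__ == "__main__":
    for die in (PASCAL, VOLTA, AMPERE):
        print(f"{die.name}: {die.density_mtr_per_mm2:.1f} MTr/mm^2 at {die.process_nm} nm")
    print(f"Transistors, Ampere vs Volta: {transistor_ratio:.2f}X")
    print(f"Die area growth: {area_growth_pct:.2f} percent")
    print(f"Density, Ampere vs Volta: {density_ratio:.2f}X")
    print(f"Raw FP64 gain over implied Volta peak: {raw_fp64_gain:.2f}X")
```

With these inputs, the transistor ratio rounds to the 2.6X quoted, the area growth comes out at about 1.35 percent, and transistor density rises roughly 2.5X. The raw FP64 units, by contrast, gain only about 1.24X over the implied Volta peak, so most of the double precision boost comes from the Tensor Cores and not from density scaling alone.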
